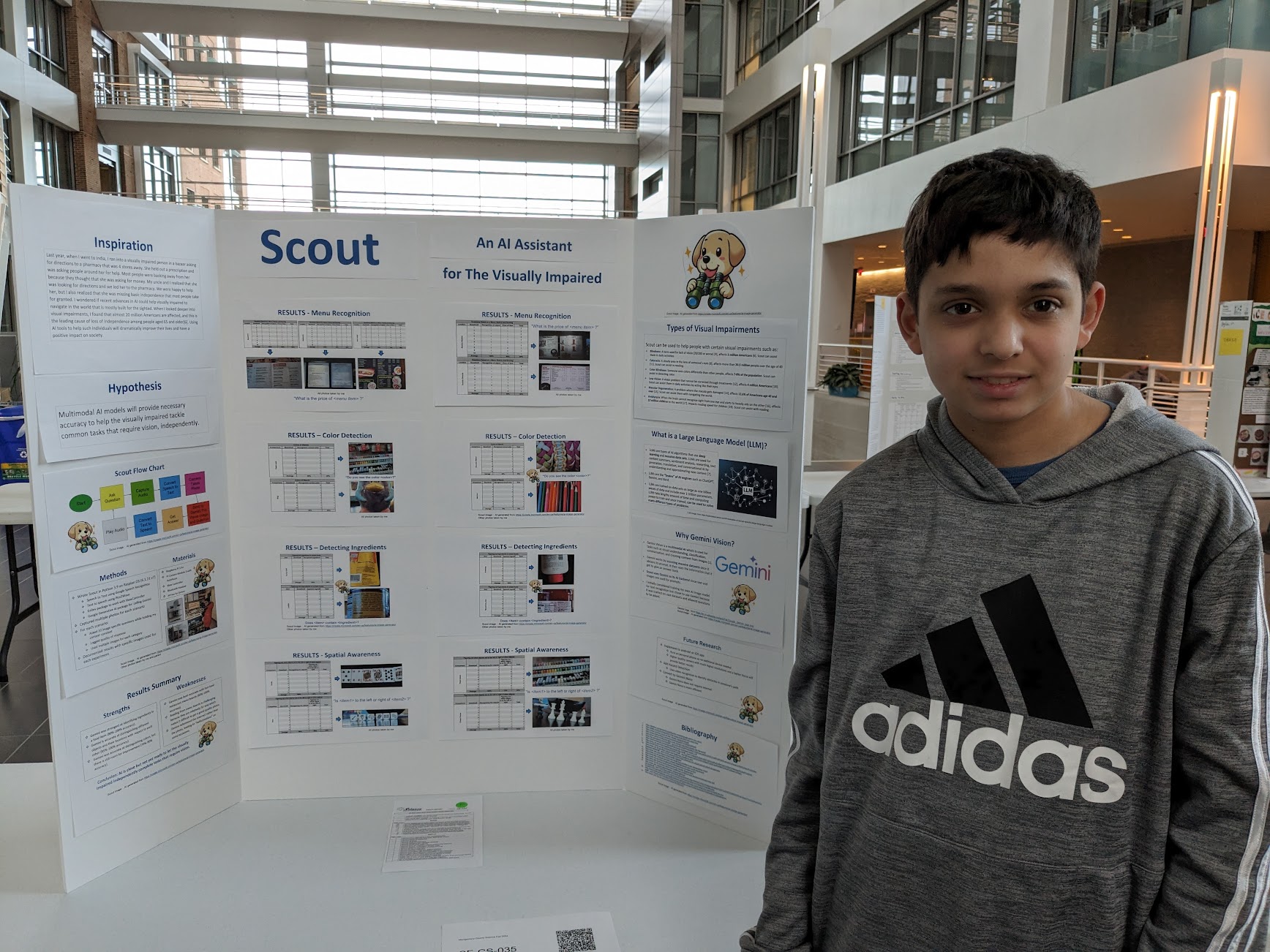

Overview

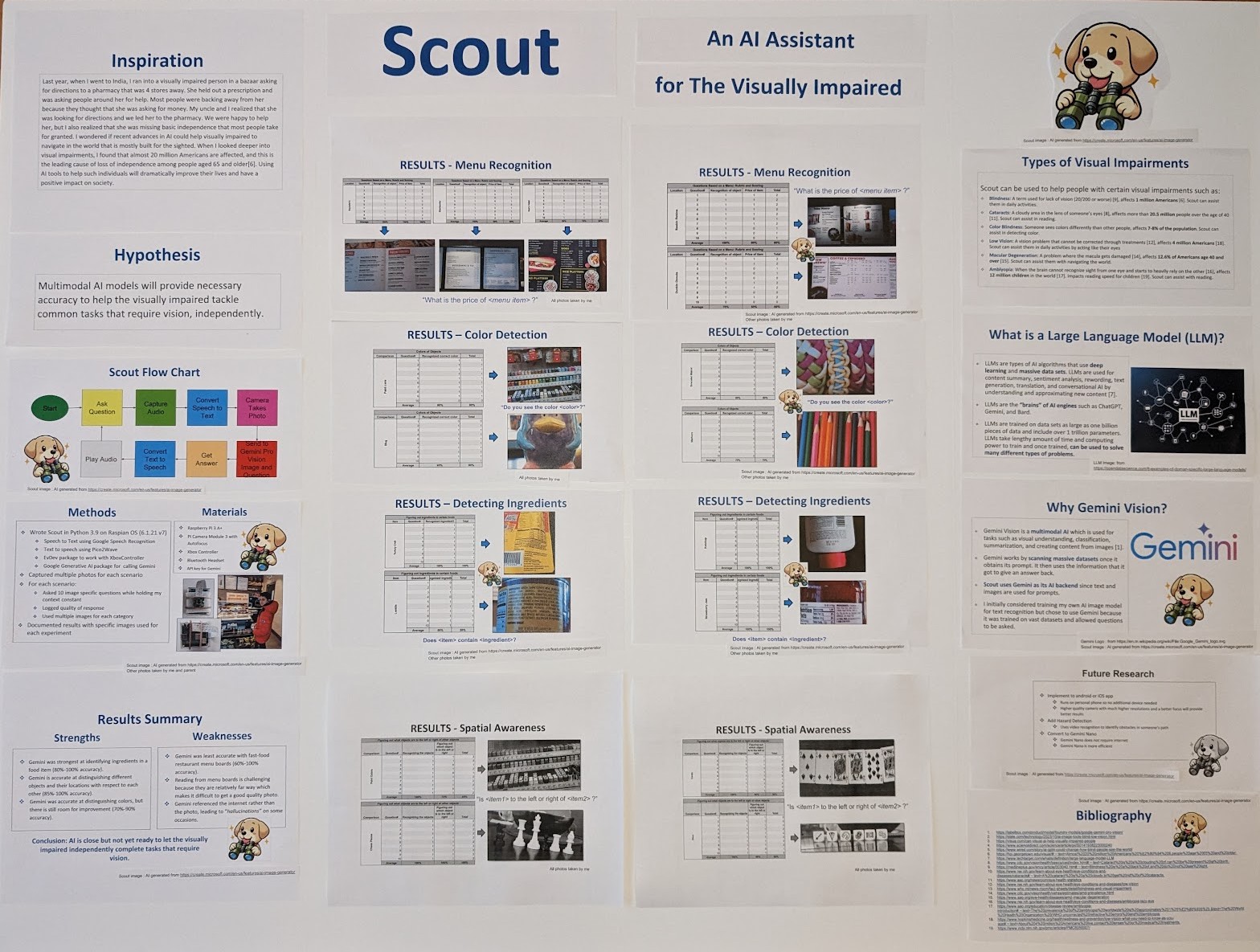

People with visual impairments still face everyday navigation challenges, like finding a specific store in a strip mall or reading a printed bus schedule. Multimodal generative AI models such as Gemini Vision, which can understand and reason over images and text together, create an opportunity to turn complex visual scenes into clear, spoken guidance. This project explores how Gemini Vision can power an assistant that helps visually impaired users navigate the world more independently.

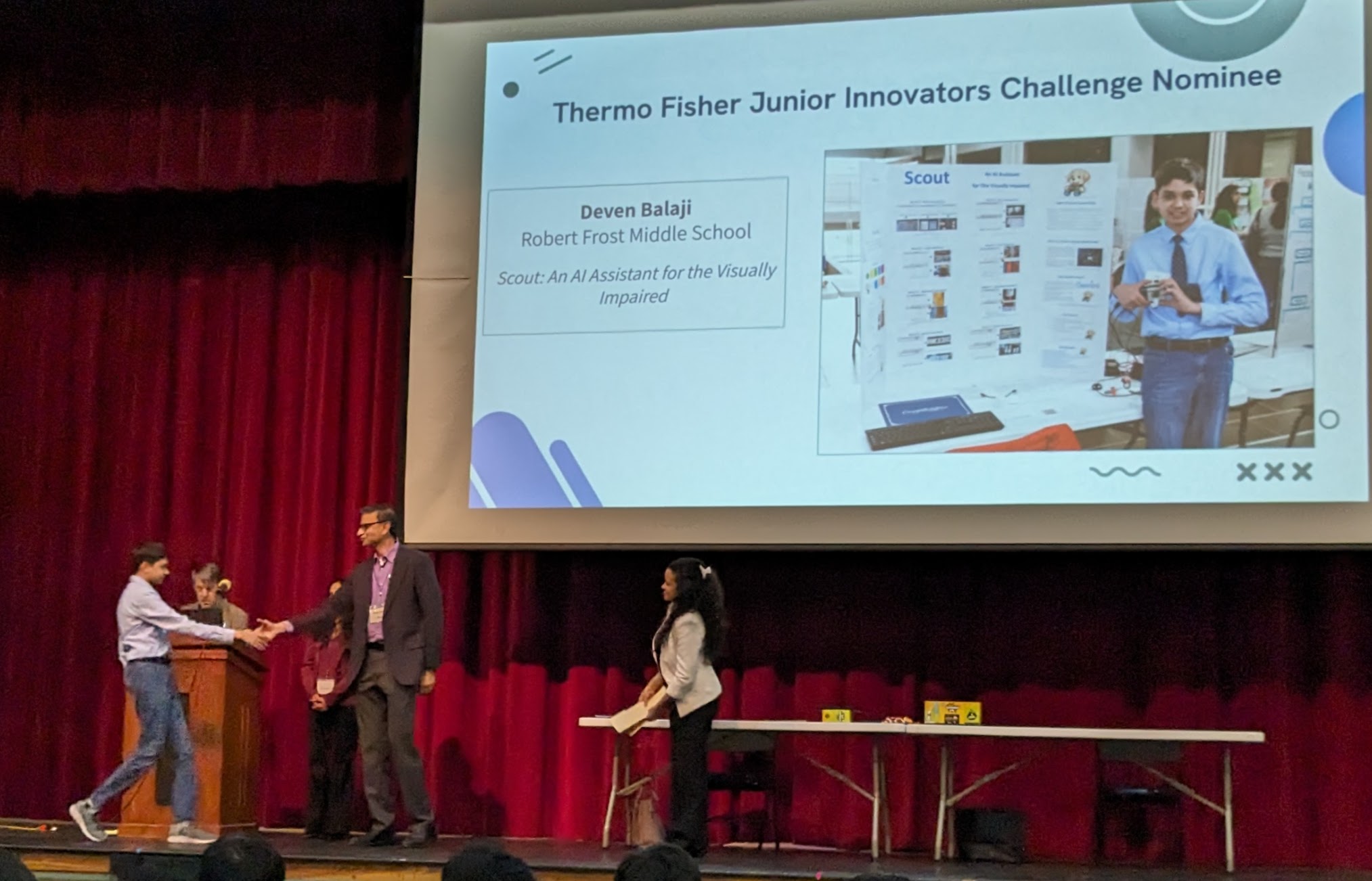

Awards & Recognition

- 1st Place – ScienceMontgomery Science Fair, Middle School Computer Science Division

- Top 300 of 1,862 – National ThermoFisher Junior Innovators Challenge

How It Works

Gemini Vision can take images or live camera frames as input and perform tasks such as describing the scene, classifying objects, reading and summarizing text, and answering questions about what it "sees" (for example, "Which storefront is the pharmacy?" or "When is the next bus listed on this schedule?"). The assistant would capture what is in front of the user via a camera, send that visual input to Gemini Vision along with the user's question, and then convert the model's response into clear audio instructions that guide the user: turn by turn, step by step, or in concise summaries of visual information.

Technology Stack

Real-World Testing

I ran 10 trials each for menu-reading scenarios across 5 situations, spatial-awareness scenarios across 4 situations, color-identification scenarios across 4 situations, and ingredient-list scenarios across 4 situations.

What I Learned

I learned how to integrate hardware and software in a real-world assistive system, dealing with sensor limits, latency, and reliability on embedded devices. Working with AI APIs taught me to manage auth, rate limits, and errors while also writing prompts so vision models give effective, useful answers. I also deepened my understanding of accessibility principles and how to present complex systems clearly and impact-first at science fairs.

Gallery